There are plenty of reasons you might consider setting up your own AI image generator. You may want to skip watermarks and ads, create as many images as you like without paying for a subscription, or explore image generation in ways that a hosted service's content guidelines would not allow. By hosting your own instance and using openly available models such as Stable Diffusion from Stability AI, you keep control over what your AI produces.

Getting Started

To kick things off, download the Invoke AI community edition from the provided link. On Windows, most of the installation is now automatic, so the necessary dependencies should install without manual steps. You might run into some challenges on Linux or macOS. For our tests, we used a virtual machine running Windows 11 with 8 cores from a Ryzen 9 5950X, an RTX 4070, 24GB of RAM, and a 1TB NVMe SSD. AMD GPUs are supported, but only on Linux.

Once the installation is complete, open Invoke AI to generate the configuration files, and then close it. This step is essential as you’ll need to modify some system settings to enable “Low-VRAM mode.”

Configuring Low-VRAM Mode

Invoke AI doesn’t clearly define what “low VRAM” means, but the 12GB of VRAM on the RTX 4070 is unlikely to be enough for a 24GB model. To work around this, open the invokeai.yaml file in the installation directory with a text editor and add the following line:

```
enable_partial_loading: true
```

After this change, Windows users with Nvidia GPUs need to adjust the CUDA – Sysmem Fallback Policy to “Prefer No Sysmem Fallback” within the Nvidia control panel global settings. You can tweak the cache amount you want for VRAM, but most users will find that simply enabling “Low-VRAM mode” is sufficient to get things running.
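
If you would rather script the config edit than do it by hand, the following is a minimal sketch in Python. It treats invokeai.yaml as plain text and only touches a top-level `enable_partial_loading` line; the function names and the `.bak` backup are illustrative assumptions, not part of Invoke AI.

```python
from pathlib import Path

SETTING = "enable_partial_loading"


def with_partial_loading(yaml_text: str) -> str:
    """Return yaml_text with a top-level `enable_partial_loading: true` line."""
    lines = yaml_text.splitlines()
    for i, line in enumerate(lines):
        # Match only an un-indented key, not a comment or a nested key.
        if ":" in line and line.split(":", 1)[0] == SETTING:
            lines[i] = f"{SETTING}: true"
            break
    else:
        # The key was not present, so append it at the end of the file.
        lines.append(f"{SETTING}: true")
    return "\n".join(lines) + "\n"


def update_config(config_path: Path) -> None:
    """Rewrite the config in place, keeping a .bak copy of the original."""
    original = config_path.read_text(encoding="utf-8")
    backup = config_path.with_name(config_path.name + ".bak")
    backup.write_text(original, encoding="utf-8")
    config_path.write_text(with_partial_loading(original), encoding="utf-8")
```

To use it, call `update_config` with the path to the invokeai.yaml file in your own installation directory while Invoke AI is closed.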

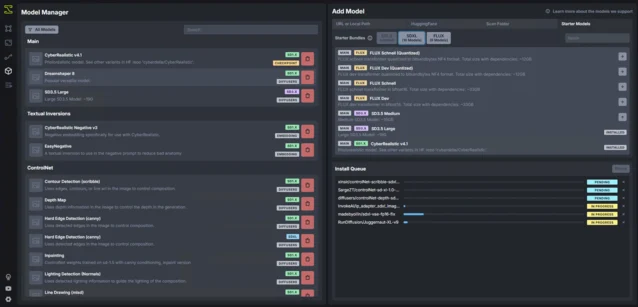

Downloading Models

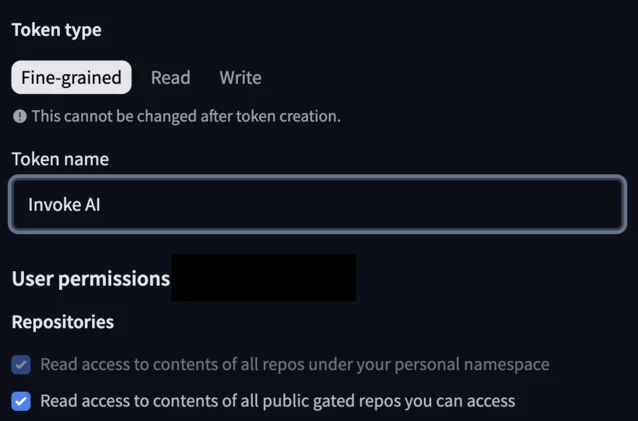

Some models, like Dreamshaper and CyberRealistic, can be downloaded right away, and there are also options to add models via URL, local path, or folder scanning. To access Stable Diffusion, however, you’ll need to create a Hugging Face account and generate a token that Invoke AI uses to pull the model. To create the token, click on your avatar in the top right corner of the Hugging Face website and select “Access Tokens.” You can name the token whatever you prefer, but make sure to grant access to the following:

Once you have the token, copy and paste it into the Hugging Face section of the models tab. You might have to confirm access on the website. There’s no need to sign up for updates, and Invoke AI will notify you if you need to allow access.

Be aware that some models take up a significant amount of storage, with Stable Diffusion 3.5 requiring around 19 GB.
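
Before starting a large download, it can help to check how much free space the target drive has. The sketch below uses only Python's standard library; the 5 GB of headroom is an arbitrary assumption, and the 19 GB in the usage note is the approximate figure quoted above.

```python
import shutil


def free_gb(path: str = ".") -> float:
    """Free space, in gigabytes (10^9 bytes), on the drive that holds `path`."""
    return shutil.disk_usage(path).free / 1e9


def has_room(model_gb: float, available_gb: float, headroom_gb: float = 5.0) -> bool:
    """True if the model fits with `headroom_gb` to spare (headroom is an assumption)."""
    return available_gb >= model_gb + headroom_gb
```

For example, `has_room(19, free_gb("."))` reports whether the current drive can take a roughly 19 GB model with some space left over. Point `free_gb` at the drive where your models are stored.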

Accessing the Interface

If everything is set up correctly, you should be ready to start. Access the interface through a web browser on the host machine by navigating to http://127.0.0.1:9090. You can also make this accessible to other devices on your local network.
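
If the page does not load, a quick way to tell whether anything is listening on that address is a small reachability check. This is a sketch using Python's standard library only; the function name and the 3-second timeout are assumptions for illustration.

```python
import urllib.error
import urllib.request


def is_reachable(url: str = "http://127.0.0.1:9090", timeout: float = 3.0) -> bool:
    """Return True if an HTTP server answers at `url`, whatever the status code."""
    try:
        with urllib.request.urlopen(url, timeout=timeout):
            return True
    except urllib.error.HTTPError:
        # The server responded, just not with a success status.
        return True
    except (urllib.error.URLError, OSError):
        # Connection refused, timed out, or the host could not be reached.
        return False
```

A result of `False` from `is_reachable()` on the host machine suggests Invoke AI is not running or is listening on a different port.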

In the “canvas” tab, you can enter a text prompt to generate an image. Just below that, you can adjust the resolution for the image; keep in mind that higher resolutions will take longer to process. Alternatively, you can create at a lower resolution and use an upscale tool later. Below that, you can choose which model to use. Among the four models tested—Juggernaut XL, Dreamshaper 8, CyberRealistic v4.8, and Stable Diffusion 3.5 (Large)—Stable Diffusion created the most photorealistic images, yet struggled with text prompts, while the others offered visuals resembling game cut scenes.

The choice of model really comes down to which one delivers the best results for your needs. While Stable Diffusion was the slowest, taking about 30 to 50 seconds per image, its results were arguably the most realistic and satisfying among the four.

Exploring More Features

There’s still a lot to explore with Invoke AI. This tool lets you modify parts of an image, create iterations, refine visuals, and build workflows. You don’t need high-end hardware to run it; the Windows version can operate on any 10xx series Nvidia GPU or newer, though you should expect slower image generation on older cards. Despite mixed opinions on AI model training and the associated energy use, running AI on your own hardware is an excellent way to create royalty-free images for various applications.

